
7/1/2023 0 Comments Caffe finetune googlenetWith this Cython approach, I am now able to harness the good CNN inferencing performance of the Jetson’s.Īs always, I spent a lot of time developing this code. I was also a little bit frustrated that NVIDIA did not make TensoRT’s Python API available for the Jetson platforms. I used to find NVIDIA’s documentations and sample code for TensorRT not very easy to follow. Comparing the TensorRT result on Jetson TX2 (140 FPS), Jetson Nano (60 FPS) did pretty OK in this case… Platform Again, I tested inferencing using either caffe or TensorRT. I also tested this TensorRT GoogLeNet code on Jetson TX2. (Note the numbers are all for Jetson Nano.) Model I extract some numbers from that blog post and make the comparison table below. The closest thing I was able to find is this NVIDIA Developer Blog, Jetson Nano Brings AI Computing to Everyone, by Dustin Franklin. I tried to find some official benchmark numbers to verify my own test result.

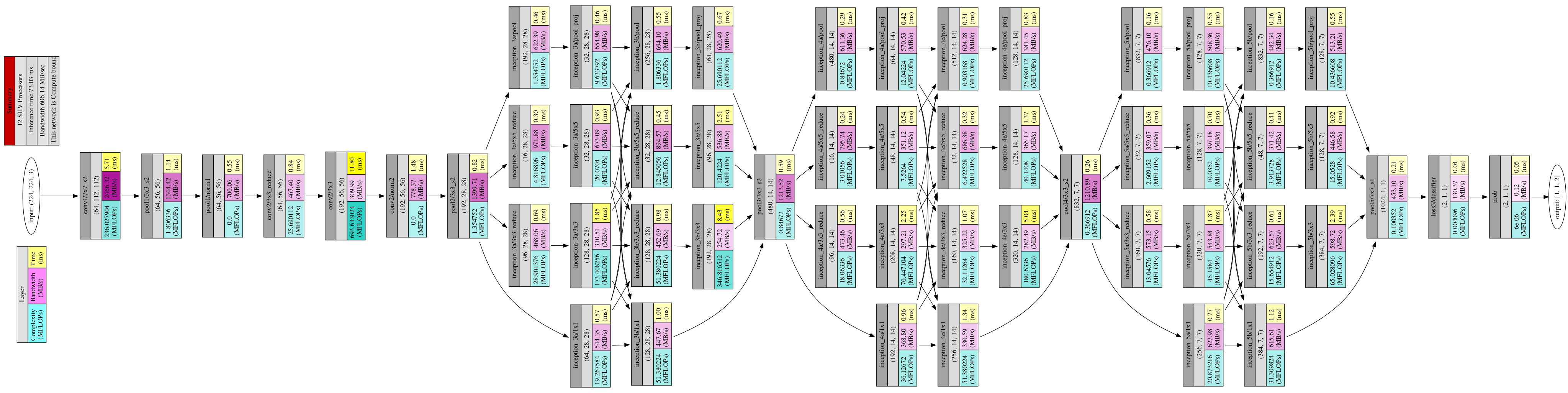

So if the video processing pipeline is done properly, we could achieve ~60FPS with this model on the Nano. Running this TensorRT optimized GoogLeNet model, Jetson Nano was able to classify images at a rate of ~16ms per frame. RESIZED_SHAPE: for resizing of input images from camera this should match ENGINE_SHAPE0.ENGINE_SHAPE1: shape of the output prob blob you need to modify it ifoutput of your model is not 1,000 classes.ENGINE_SHAPE0: shape of the input data blob you need to modify it if your model is using a input tensor shape other than 3x224x224.DEPLOY_ENGINE: name of the TensorRT engine file used for inferencing.In trt_googlenet.py, modify the following global variables:.Also replace synset_words.txt so that the list of class names match your model. Replace the deploy.prototxt and deploy.caffemodel with your own model. Make a copy of the googlenet/ directory and rename it.If you’d like to adapt my TensorRT GoogLeNet code to your own caffe classification model, you probably only need to make the following changes: For more details, please refer to Cython’s Documentations. I used Cython to wrap TensorRT C++ code, so that I could call them from python. TensorRT Python API is not available on the Jetson platforms. Full C++ source of this sample code could be found at /usr/src/tensorrt/samples/sampleGoogleNet on the Jetson Nano DevKit. I developed my C++ code in this demo mainly by referencing TensorRT’s official GoogLeNet sample. $ /project/tensorrt_demos/googlenet/deploy.prototxt When I tested it with a USB webcam (aiming at a picture shown on my Samsung tablet), I was able to see the picture classified correctly by the TensorRT GoogLeNet as: 1.

In short, you need to make sure you have TensorRT and OpenCV properly installed on the Jetson Nano, then you just clone the code from my GitHub repository and do a couple of make’s. Please refer to README.md in my jkjung-avt/tensorrt_demos repository for the steps to set up and run the trt_googlenet.py program.
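For the adaptation steps described earlier, here is a rough sketch of how the four global variables in trt_googlenet.py might be set, plus a quick sanity check. The variable names come from the post. The engine file name, the exact tuple layouts, the (width, height) ordering of RESIZED_SHAPE and the two helper functions are assumptions for illustration only, so check them against the actual file in the repository.

```python
# Illustrative values only: the variable names come from the post, but the
# file name and tuple layouts below are assumptions, not the real source.
DEPLOY_ENGINE = 'mymodel/deploy.engine'  # hypothetical engine file name
ENGINE_SHAPE0 = (3, 224, 224)            # input data blob: (channels, height, width)
ENGINE_SHAPE1 = (1000, 1, 1)             # output prob blob: 1,000 classes (layout assumed)
RESIZED_SHAPE = (224, 224)               # camera frames resized to (width, height), assumed


def check_shapes(engine_shape0, resized_shape):
    """Return True if the resize target matches the input blob's spatial size."""
    _, height, width = engine_shape0
    return tuple(resized_shape) == (width, height)


def fps_from_latency_ms(latency_ms):
    """Convert a per-frame latency in milliseconds to frames per second."""
    return 1000.0 / latency_ms


if __name__ == '__main__':
    print('shapes consistent:', check_shapes(ENGINE_SHAPE0, RESIZED_SHAPE))
    print('FPS at 16 ms per frame:', fps_from_latency_ms(16.0))
```

A check like this catches the most likely mistake when swapping in a different model, which is changing the input blob shape but forgetting to update the resize target. The latency helper simply restates the arithmetic behind the ~16ms and ~60 FPS figures.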
